With another article published lauding the Estonian education system, we thought it timely to...

Blog

The challenge of using AI in schools

Authored by John Snell, Open Minds Education Expert, & UK Head Teacher and Education...

Stop Mandated Shunning: announcement

As a board member of the “Open Minds Foundation”, I am co-founding the “Stop Mandated...

Developing critical thinking in primary schools, with the Open Minds Foundation

Victoria Petkovic-Short, Executive Director for the Open Minds Foundation, leads a short webinar...

Developing critical thinking through play

In the UK, children aged between 4 and 11 typically spend around 32.5 hours a week in school. Much...

How individuals can avoid sharing mis- and disinformation

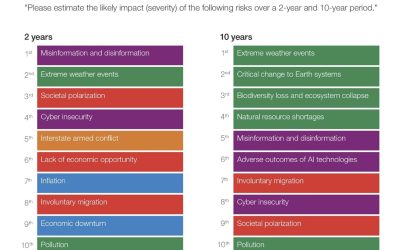

According to the World Economic Forum’s Global Risks Report 2024, misinformation and...

The Martyr Complex and Conspiracy Theorists

A narcissistic martyr is motivated in their excessively sacrificial actions by their desire for praise, admiration, and gratitude.

The global risk of misinformation and disinformation

According to the World Economic Forum’s Global Risks Report 2024, misinformation and...

How to SCAMPER

A 2017 study published by Stanford found that people typically fall into two groups – vertical...

November news recap

The trending topics in recent weeks put disinformation front and centre, especially in the context...

The importance of debate for critical thinking

Critical thinking is a key skill that should be taught to, and refreshed in, people of all ages....

The impact of social media on critical thinking

Social media can be a wonderful thing, but it can also be a tool used to spread misinformation and...

Inversion: the critical thinking style that can drive fresher idea generation.

Critical thinking is an ever-evolving discipline and inversion is a style of thinking that can...

The Importance of School Values

Authored by John Snell, Open Minds Education Expert, & UK Head Teacher and Education...

Is Meta doing enough to protect WhatsApp users against misinformation?

While credible news posts tend to trickle onto timelines in a steady stream, studies show...

Misinformation Susceptibility Test

Psychologists at the University of Cambridge have developed the first validated “misinformation...

Developing Rules for Positive Behaviour in Primary Schools

Authored by John Snell, Open Minds Education Expert, & UK Head Teacher and Education...

April news recap

AI platforms, the impacts of misinformation, and geopolitical tensions have been the trending...

Open Minds Foundation: progress update

In the last six weeks, we've made two significant leaps in bringing our critical thinking...

Narrative laundering: the new face of disinformation

Narrative laundering has become the latest tool for spreading disinformation, seeking to sow...

Report highlights AI is extremely fallible

A new report from the Center for Countering Digital Hate (CCDH) has found that AI Chatbots can be...

March news recap

For the last few weeks, fake news, misinformation, and disinformation have been heavily featured...

The power of prebunking against coercive control

In the fight to improve critical thinking, organizations are highlighting the value of...

How to dodge fake news…

Fake news is a problem. It pervades our consumption of media, and we live in a society that...

February News Recap

Technology topped the charts of news in early 2023, with the grand reveal of Artificial...

We all need media literacy, more than ever!

50 years ago, “media” comprised the regular newspapers as well as selected radio and TV...

Thinking outside the box – developing deeper thinking in schools

Authored by John Snell, Open Minds Education Expert, & UK Head Teacher and Education...

Lower ability in the classroom – fact or fiction?

Authored by John Snell, Open Minds Education Expert. Having worked in schools for over 25 years,...

Are algorithms affecting how we think?

It is no secret that the majority of modern media platforms exist and operate thanks to...

Art or lies? Does television contribute to misinformation?

One television programme coming under fire currently is The Crown on Netflix, which taps into a...

Is modern media killing critical thinking?

Thanks to the marvels of modern technology, we can now see exactly how many hours and minutes we...

Supporting children with their wellbeing

Authored by John Snell, Open Minds Education Expert. There is a wealth of information and data...

Tackling the rising tide of misinformation

In a world where the 2016 Oxford Dictionaries word of the year was “post-truth” pertaining to...

Critical thinking: simple strategies promise significant gains

[Opinion piece] There is growing research supporting not only the personal value of improved...

The power of lateral reading

How we consume and read content has changed, in large part because the way that content is...

A license to kill people socially

Authored by Patrick Haeck [This blog provides an update to Patrick's previous experience in a...

The meaning between the words

When considering our tendency to “Truth Bias”, it is no surprise that what people say, and how the...

The role of “illusory truth” in misinformation

Building on the notion of truth bias, the illusory truth effect (ITE) demonstrates the importance...

Why we believe in truth and honesty, even when we shouldn’t

There is a phenomenon called “truth bias” which leads us to believe people are telling us the...

Jehovah’s Witnesses appeal: shunning is a crime

Following success in the courts of Belgium in getting religious shunning recognised as a crime, a...

Critical thinking: is Estonia offering a model education system?

Authored by Educational Leader John Snell, UK Headteacher, & advisor to the Open Minds...

Do you know the signs of gaslighting?

If a friend, colleague, or family member starts to change their behaviour, then it could be a sign...

White Supremacists on Trial: racial radicalisation in the US

On 11 August 2017 the “Unite the Right” white supremacist rally began in Charlottesville,...

That statistic: is it true?

Authored by Victoria Petkovic-Short. When you see a claim backed up by statistics, how do you know...

The problem with statistics…

A statistic is defined as “a fact or piece of data obtained from a study of a large quantity of...

The key to eliminating coercion lies in critical thinking

Author: Richard Kelly | January 2022. The mission for the Open Minds Foundation is “to create...

Critical thinking: a practical group exercise for the classroom

Tackling the challenge of classroom groupthink. One of the core challenges of working with young...

Coercion at its Worst: Religious Mandated SHUNNING

By Patrick Haeck | January 2022. One of the most onerous forms of coercive control is mandated...

What is confirmation bias?

Confirmation bias (also referred to as myside bias) is a broad term encompassing a number of...

Combatting misinformation with the rule of five

Hands up who has a favourite news resource that they refer to? It could be a news website, a...

Critical Thinking in Primary Schools

Authored by John Snell. Developing critical thinking skills in primary schools can appear to be a...

The power of propaganda and how to fight it

Under scrutiny until at least January 2022, the oldest defendant to stand trial so far will be...

What is groupthink?

Groupthink occurs when a group of people reach full consensus on an issue or decision, without...

Welcome to Open Minds 2.0

Following our very gentle relaunch last week, we’ve been overwhelmed with the response, comments,...

Critical Thinking and Meaning while Dialoging with Groups Like Jehovah’s Witnesses

Article supplied by Robert Compton | June 2021. In this paper I want to reflect upon how we use...

Manipulation at its Best (or Worst)

Few groups manipulate their members to act against their own best interests more effectively than...

Teaching Teenage Students about Undue Influence

We are always on the lookout for best practice examples of educating teenage students about the...

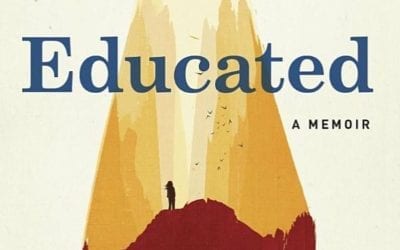

Nothing but PRAISE for Educated

The New York Times Book Review proclaims, “Heart-wrenching…. A beautiful testament to the power of education to open eyes and change lives.”

Questioning our Unquestionable Assumptions

If we can be patient with our feelings of unease, and learn to question our assumptions, no matter...

15 Years Later – Undoing the Undue Influence

I was a Jehovah’s Witness from childhood to my late twenties. I officially disassociated myself...

The Overwhelmed Brain

I read Oliver Sacks’ seminal The Man Who Mistook his Wife for a Hat in 1984. But, twenty or so...

Cognitive Dissonance, Revisited

Cognitive dissonance is perhaps the most fundamental concept for an understanding of undue...

The long history of undue influence

Undue influence has been recognized in law for more than 500 years. It is a legitimate legal...

When the Alarm Sounds

Imagine you are at work. You are sitting at your desk, engrossed in a task. Suddenly the fire...

Building a Life after Leaving a High-Control Religion

Amber Scorah’s “Leaving The Witness”. A good writer can hook and hold you from start to finish with...

The Journey of the Lost

Many people leaving cults or high-pressure groups understandably say they feel lost. How could...

Cognitive Dissonance at Work

Knowing how cognitive dissonance works is the key to understanding why the human brain is so...

Potential Recruits for Authoritarian Groups

There is a general belief that only weak people are taken in by authoritarian groups, but this...

The Process of Undue Influence

The process of undue influence follows a predictable series of steps. First comes contact. This...

No Safety in Numbers with Undue Influence

Any examination of history shows that people can be brought to believe almost anything. So,...

Our Susceptibility to Undue Influence

It is not just the Internet that is rife with scams. Trickery is an aspect of human nature, and it...

How Easy is it to Trick the Brain?

Psychological studies have repeatedly shown how easily rational thinking is bypassed. Our...

Conformity

Open Minds would like to give a shout-out to bestselling author Cass R. Sunstein and his new book,...

Faith-based Medical Neglect and Undue Influence

With more than 8,000,000 purported members, it is likely Jehovah's Witnesses are the foremost...

The Paradox of Undue Influence

The great problem with undue influence is that it has a before and an after, but no during. While...

Are You Being Misled Online?

Have you ever been misled online? If so, you are not alone! It’s not easy to discern fact from...

Developing Your Own Fact Detector

"Fake news" is one of the biggest concerns of our time, and with good reason. If people can't get...

News: Fake or Real? Try the Scientific Approach

As you scroll through your social media wall you encounter an article someone shares that sends...

Ukraine is Educating Students to Identify Fake News and Propaganda

Learn to Discern - Media Literacy Training. On March 22, 2019, NPR published this article,...

Techniques Used by Authoritarian Groups – New Mini-poster

Manipulative, authoritarian groups can be found in many varieties - from destructive religious...

Combating Cult Mind Control – The #1 Best-Selling Guide to Protection, Rescue and Recovery from Destructive Cults

If you're reading CCMC for the first time, please know that you've found a safe, respectful,...

Terror, Love and Brainwashing – Attachment in Cults and Totalitarian Systems

Written by a cult survivor and renowned expert on cults and totalitarianism, Terror, Love and...

Inside Out – A Memoir of Entering Into and Breaking Out of a Minneapolis Political Cult

A gripping literary memoir of life inside an extremist political group. "If you want to know how...

Opening Minds – the Secret World of Manipulation, Undue Influence and Brainwashing

Opening Minds by Jon Atack shows how we can be cajoled into accepting unethical, uninvited and...

Let’s Sell These People a Piece of Blue Sky

Let’s Sell These People a Piece of Blue Sky: the new, unexpurgated, unabridged version of the...

Foucault’s Hair – Part Two: Cults and Multiculturalism

The reduction in slavery, exploitation, abuse, humiliation, and physical and psychological...

Foucault’s Hair – Part One: Two Frenchmen

If I said that the thing I most appreciate about Michel Foucault is his hairstyle, surely someone...

In Loving Memory of Laura Ann Gracey – A Father Remembers

My darling daughter Laura Ann Gracey was born on July 17th, 1972, in Orange, California. My wife...

Saving a Thousand Lives a Year: Reforming Watchtower’s Policy on Blood – Part Two

The Watchtower strikes back. To counter the mounting criticism concerning internal judicial action...

Saving a Thousand Lives a Year: Reforming Watchtower’s Policy on Blood – Part One

A brief history of Advocates for Jehovah's Witness Reform on Blood (AJWRB) and Watchtower’s Blood...

Fifth: “Thou shalt not kill” – The dark side of faith

You're walking along the railroad tracks when you see a runaway trolley barreling down. It is...

Cult, Gang, or New Religious Movement?

The Open Minds Foundation is not a counter-cult group, though totalist cults are part of our...